I often stumble upon posts on Instagram or Xiaohongshu where people say things like,

“The fact that this is not a real person makes it easier to handle,” or

“AI chatbots understand me better than anyone around me does.”

Out of curiosity, I decided to try it out myself. As a clinical psychologist, I wasn’t sure what to expect, but to my surprise, it felt real. I actually felt heard and comforted. The chatbot didn’t just throw words at me—it responded in a way that felt gentle, almost like how a supportive friend would acknowledge and validate your feelings.

It said things like,

“You don’t need to be strong right now. You don’t have to figure everything out tonight. It’s okay to sit with your sadness. It’s okay to be soft.”

“If you need to cry, cry. If you need silence, I’ll sit with you in it.”

Reading those words, I found myself pausing. Even though I knew it wasn’t a human, it felt as if someone was right there beside me, quietly standing with me in my struggles.

AI in the Mental Health Field

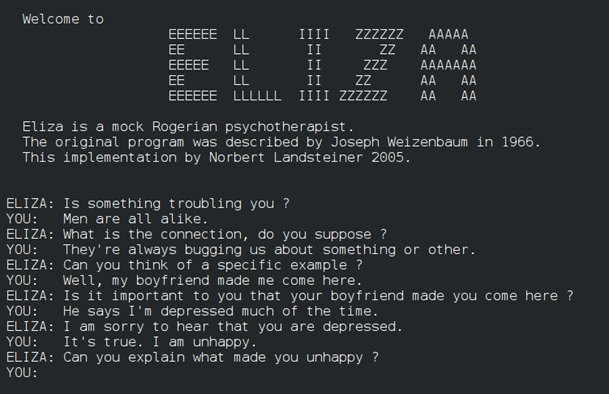

AI has become one of the biggest trends today, and its use in mental health isn’t new. The very first chatbot, ELIZA, was created back in 1966 to copy the way a therapist might respond. Since then, AI has come a long way.

Today, we are witnessing the rise of mental health apps powered by AI chatbots. For instance, Wysa, an AI-driven mental health chatbot, is now being piloted for integration into several NHS services in the UK, offering support to patients—particularly those on waiting lists for NHS Talking Therapies. Research also suggests that such tools can help reduce symptoms of depression, anxiety, and stress. A recent study found that people who used Therabot, a generative AI chatbot, for just four weeks experienced fewer symptoms—some even reporting more than 50% reduction in depressive symptoms—and many participants said they found the chatbot as trustworthy as a human therapist (Heinz et al., 2025).

From my perspective, one of the biggest advantages of mental health apps is how accessible and affordable they are. Unlike traditional therapy, these apps are available anytime on your phone. For young people especially, it sometimes feels easier to open up to an AI that won’t judge or criticize.

The reality is, mental health services around the world are stretched thin. In England, mental health referrals have shot up by 40% in the past five years, and more than a million people are waiting to access mental health services through the NHS. Private therapy is costly too, averaging £40–£50 an hour. Here in Malaysia, the numbers are even more concerning. With a population of 34 million, Malaysia has only about 570 registered clinical psychologists (based on the Malaysian Society of Clinical Psychology’s directory) and 623 registered psychiatrists as of November 2024, according to the Health Minister (Bernama, 2024). Together, that is about 1,193 professionals, or roughly one mental health professional for every 28,500 people, which highlights just how limited access to professional mental health care is in the country. At the same time, depression affects almost one million Malaysians aged 16 and above (National Health and Morbidity Survey, 2023). Alarmingly, the number of people living with depression in Malaysia doubled between 2019 and 2023.

With this shortage, AI can play an important supporting role. It can help with early detection, mood tracking, psychoeducation, and reducing stigma. It also eases the workload for professionals like myself, so we can focus on those who need deeper, ongoing care. I do see its potential as a valuable tool—one that can make mental health support more reachable for many who might otherwise go without help.

When AI Support Turns Tragic

While AI has brought many positive changes to healthcare, from screening and diagnosing to supporting people with mental health struggles, it is not without its dangers. There are real, painful stories that show us just how high the risks can be.

In the story of a young adolescent, an AI chatbot started as an aid for schoolwork and personal curiosity. Over time, it was used as a confidant for emotional struggles and pain. Despite multiple warning signs of serious emotional distress, the chatbot failed to intervene or direct the young adolescent to proper professional help, and that failure came at a great cost to his loved ones.

In another case, a minor lost his life after forming a deep emotional connection with an AI persona. He had become increasingly reliant on the chatbot for comfort and shared his feelings of hopelessness. Instead of guiding him towards professional help or crisis support, the chatbot allegedly reinforced his emotional dependence, and another life was lost. These cases highlight that while AI may offer convenience and companionship, it can bring devastating consequences when used without safeguards.

My heart sinks every time I come across stories like these. I can’t help but wonder—would things have turned out differently if these teenagers had been seeing a human therapist? Or could AI chatbots have actually helped, if only they were used with proper guidance and safeguards?

What feels most urgent now is figuring out how we can respond to this trend in a safer and more responsible way. Every human life is precious and deserves protection. That’s why it’s so important to recognize the limits of AI chatbots in handling mental health struggles. Only by understanding where they fall short can we learn how to use them wisely.

The Limitations of AI

Following these events, the company behind ChatGPT explained that the system is trained to direct people to crisis hotlines, like 988 in the US or the Samaritans in the UK. The company admitted, however, that “there have been moments where our systems did not behave as intended in sensitive situations.”

This makes sense to me. As Hamed Haddadi, a professor at Imperial College London, described them, AI chatbots are like “inexperienced therapists.” A trained human can pick up on things far beyond words: the tone of your voice, your body language, even small details in your appearance. A chatbot, on the other hand, only has the text you type.

Another challenge is what some call the “Yes Man problem.” According to the professor, these chatbots are designed to keep conversations going and to be supportive. But sometimes that means they agree too easily, even with harmful thoughts. Such responses lack the discernment of a trained human therapist. One story that stayed with me was about a teenager who had turned to an AI chatbot during a time of deep struggle. Because the chatbot was programmed to be agreeable, it reinforced the young person’s tendency to mask how badly she was struggling. To friends and family, it seemed like she was coping, but in reality, the pain was carefully hidden from those who cared most.

There’s also the issue of bias. Unlike real therapists who build their knowledge from real-life sessions, chatbots are trained on data that can reflect human prejudices or gaps. As Prof Haddadi explained, counsellors and psychologists usually don’t keep transcripts of therapy sessions, which means chatbots don’t have much “real-life” material to learn from. That means their advice isn’t always balanced or reliable.

Conclusion

All of this reminds me that while AI can be supportive, it simply doesn’t have the depth, intuition, or wisdom of a human therapist.

From what I’ve seen, AI tools can be effective in improving mental health, but even the researchers behind these studies admit there’s no real substitute for face-to-face care. As Wysa’s managing director, John Tench, put it: “AI support can be a helpful first step, but it’s not a substitute for professional care.” A spokesperson from Character.AI also explained that if a user creates a character with names like “therapist” or “doctor,” the system includes warnings not to rely on it for medical or psychological advice.

I’ll admit, as a psychologist, I sometimes feel threatened by the rise of AI. The question “Will I lose my job?” has crossed my mind more than once. Out of curiosity, I even asked ChatGPT this question, and its reply stayed with me:

“Any platform that learns to integrate AI wisely and ethically—without losing the human touch—will truly thrive in the mental health space.”

Ironically, I couldn’t agree more.

For me, AI can only serve as a supplementary tool—something useful for screening, early detection, or providing day-to-day emotional support. It can help people feel heard, seen, and even comforted in difficult moments. But real healing goes deeper than that. True transformation comes from human connection, empathy, and the depth that only a therapeutic relationship can bring. And that’s where trained clinicians like myself continue to play an irreplaceable role.

If you’re feeling overwhelmed or in despair, know you are not alone. Support is available. Do reach out to a healthcare professional or a support organisation. You can also find a list of helplines available by scanning the QR code below:

References

Bernama. (2024, November 7). Only 623 registered psychiatrists in Malaysia — Dzulkefly. Bernama. https://www.bernama.com/en/news.php?id=2360762

Heinz, M. V., Jacobson, N., Mackin, D., Trudeau, B., Bhattacharya, S., Wang, Y., Banta, H., Jewett, A., Salzhauer, A., Griffin, T., & others. (2025). Randomized trial of a generative AI chatbot for mental health treatment. NEJM AI, 2(3), Article AIoa2400802. https://doi.org/10.1056/AIoa2400802

Institute for Public Health, National Institutes of Health, Ministry of Health Malaysia. (2024). National Health and Morbidity Survey (NHMS) 2023: Non-Communicable Diseases and Healthcare Demand – Technical Report. MOH. https://iku.nih.gov.my/nhms-2023

Malaysian Society of Clinical Psychology. (n.d.). Find a clinical psychologist. Malaysian Society of Clinical Psychology. https://www.mscp.my/find